Data Warehousing: Snowflake, Databricks, BigQuery & Cloud EDW

Enterprise Data Warehousing in 2026: Architectural Paradigms, Platform Selection, and Legacy Migration Strategies

The Macroeconomic and Technological Drivers of Enterprise Data Warehousing

The global enterprise data warehousing (EDW) ecosystem is currently undergoing a profound structural evolution, propelled by the exponential proliferation of digital information generated by 5G networks, interconnected Internet of Things (IoT) devices, and highly distributed enterprise applications. As of 2025, the EDW market valuation stands at a robust $25.47 billion, with detailed industry projections indicating an immediate expansion to $27.24 billion by 2026 and an ultimate, aggressive surge to $53.48 billion by the year 2035. This long-term trajectory represents a steady compound annual growth rate (CAGR) of 7.7% over the forecast period. North America currently dominates the global market, a position it is projected to maintain with a 33.5% market share by 2035, while the Asia Pacific region represents the fastest-growing geographical sector, driven by aggressive digital transformation initiatives in emerging economies.

This consistent financial expansion underscores a fundamental operational pivot within modern enterprises: the transition from legacy, on-premises data silos to elastic, cloud-native data platforms. By 2026, cloud data warehouses have become the enterprise standard, and real-time data warehousing has shifted from a competitive luxury to a baseline operational necessity. The velocity of modern commerce, particularly in the logistics and warehousing sectors that rely heavily on precise data infrastructure, demands analytics architectures capable of executing complex aggregations in milliseconds. For a sense of the scale of data generation, the broader general warehousing sector is projected to reach a market valuation of $563.09 billion by 2029. By 2026, an estimated 4,281,585 commercial warehouse robots installed worldwide are expected to transform how facilities operate, generating petabytes of telemetry data that must be ingested, normalized, and analyzed in real time.

However, navigating this infrastructural transition introduces distinct technical friction. Organizations face unprecedented complexities in establishing seamless data integration across disparate sources, requiring the unification of unstructured social media ecosystems, highly relational customer relationship management (CRM) platforms, and rigid enterprise resource planning (ERP) systems without compromising strict security perimeters. Furthermore, a pronounced global shortage of skilled technical personnel acts as a severe market constraint, forcing enterprise data warehouse vendors to innovate rapidly toward low-code, fully automated solutions.

In direct response to these friction points, the industry is experiencing the rapid mainstream adoption of Data Warehouse as a Service (DWaaS) and automated data warehousing. Automation is aggressively reshaping data management protocols, directly addressing the engineering skills gap by autonomously generating data models, streamlining real-time data ingestion, instantiating specialized data marts, and enforcing governance controls without requiring manual oversight. Organizations successfully implementing these automated, cloud-native architectures report transformative business outcomes. Documented enterprise deployments demonstrate up to a 60% reduction in operational costs due to automated data management, a 30% increase in analytics team productivity through self-service reporting, and up to a 10% revenue increase directly attributable to superior market trend visibility and risk mitigation. To achieve these outcomes, the strategic selection of core data platforms and the sophisticated methodologies employed to migrate legacy data represent foundational decisions that will dictate an enterprise’s analytical maturity for the next decade.

The Architectural Schism: Snowflake versus Databricks

The modern data stack is largely defined by a duopoly at the compute and storage layer: Snowflake and Databricks. While both platforms operate as premier cloud-native data solutions, their foundational architectures, primary workload optimizations, and philosophical approaches to data management differ radically. Evaluating them goes well beyond a feature-parity comparison; it demands strategic alignment between an organization’s internal engineering competencies, its artificial intelligence ambitions, and its overarching enterprise architecture.

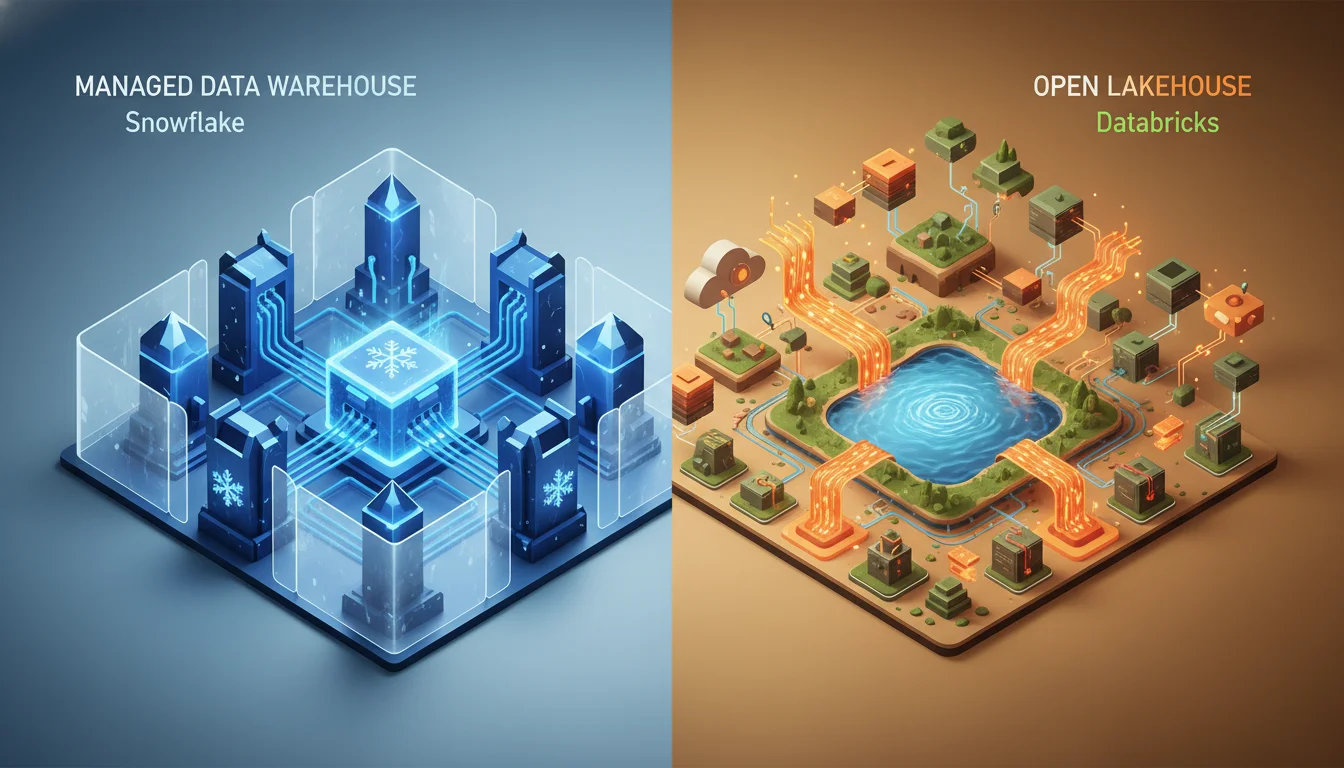

Fundamental Paradigms: The Managed Data Warehouse versus The Open Lakehouse

The defining divergence between the two platforms resides in their structural origins and storage philosophies. Snowflake was engineered natively for the cloud as a highly optimized data warehouse, prioritizing declarative SQL workflows, traditional business intelligence (BI), and strictly governed enterprise analytics. Its architecture utilizes a unique hybrid approach combining shared-disk and shared-nothing models, deliberately decoupled into three distinct tiers: a centralized Storage Layer, a Compute (Query Processing) Layer, and a Cloud Services Layer.

When data enters Snowflake, it is ingested, compressed, and restructured into a proprietary, highly optimized columnar format organized into micro-partitions. This data is managed entirely by Snowflake, which handles file size, structure, metadata, and background statistics autonomously. The Compute Layer relies on “Virtual Warehouses,” which are independent Massively Parallel Processing (MPP) compute clusters. Because each cluster follows a “shared nothing” model, with its own compute resources reading from the central storage layer, workloads are isolated from one another: resource-intensive data loading pipelines running in one virtual warehouse do not compete for compute with high-concurrency BI dashboards running in another. The Cloud Services layer acts as the centralized brain of the platform, handling user authentication, infrastructure provisioning, query parsing, and access control. This design abstracts infrastructural complexity away from the user, delivering an “instant-on” experience characterized by near-zero operational maintenance.

Databricks, conversely, pioneered the “Lakehouse” paradigm, an architectural model specifically engineered to unify the low-cost flexibility and scale of unstructured data lakes with the reliability and transactional integrity of traditional relational data warehouses. Rooted deeply in the open-source Apache Spark ecosystem, Databricks relies fundamentally on Delta Lake technology. Delta Lake extends standard Parquet data files by appending a sophisticated file-based transaction log. This foundation enables true ACID (Atomicity, Consistency, Isolation, Durability) transactions, scalable metadata handling, and atomic updates directly on top of cheap cloud object storage (such as AWS S3 or Google Cloud Storage).
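To make the idea of a file-based transaction log concrete, the following is a toy sketch in plain Python, not Delta Lake's actual implementation. It assumes a simplified log in which each commit is a numbered JSON file containing "add" and "remove" actions; the function names commit and live_files are hypothetical. Atomicity comes from exclusive file creation: only one writer can claim a given version number, and readers reconstruct the current snapshot by replaying commits in order.

```python
import json
import os
import tempfile


def commit(log_dir: str, version: int, actions: list[dict]) -> bool:
    """Try to write commit `version`; return False if that version already exists."""
    path = os.path.join(log_dir, f"{version:020d}.json")
    try:
        # Mode "x" fails if the file exists, so only one writer can claim a version.
        with open(path, "x", encoding="utf-8") as f:
            for action in actions:
                f.write(json.dumps(action) + "\n")
        return True
    except FileExistsError:
        return False


def live_files(log_dir: str) -> set[str]:
    """Replay commits in version order to find the data files in the current snapshot."""
    files: set[str] = set()
    # Zero-padded names sort lexically in the same order as their version numbers.
    for name in sorted(os.listdir(log_dir)):
        with open(os.path.join(log_dir, name), encoding="utf-8") as f:
            for line in f:
                action = json.loads(line)
                if "add" in action:
                    files.add(action["add"]["path"])
                elif "remove" in action:
                    files.discard(action["remove"]["path"])
    return files


# Hypothetical usage: two successful commits, then a writer that loses the race for version 1.
log_dir = tempfile.mkdtemp()
commit(log_dir, 0, [{"add": {"path": "part-0000.parquet"}}])
commit(log_dir, 1, [
    {"remove": {"path": "part-0000.parquet"}},
    {"add": {"path": "part-0001.parquet"}},
])
lost_race = commit(log_dir, 1, [{"add": {"path": "part-0002.parquet"}}])
snapshot = live_files(log_dir)
```

Because the Parquet files themselves are never modified in place, an update in this model is simply a new commit that removes one file and adds its replacement; a reader sees either the old snapshot or the new one, never a mixture.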

Rather than utilizing proprietary, locked-in storage formats, Databricks champions open data formats, ensuring that organizations can access their data using third-party compute engines, thereby aggressively mitigating the risk of vendor lock-in. To optimize query performance over these open files, Databricks employs techniques like Liquid Clustering for dynamic table organization, automated data skipping to reduce scanned files, and a VACUUM command to systematically remove unused data files and maintain optimal storage footprints. Its processing muscle is derived from a combination of highly distributed Spark computing and the proprietary Photon execution engine, which handles both massive batch processing and continuous streaming data with exceptional throughput. Databricks inherently provides granular control over compute clusters, allowing sophisticated data engineering teams to meticulously tune infrastructure for highly specific, resource-intensive pipelines, though this flexibility requires a much higher degree of technical expertise to manage effectively.

Advanced Analytics, Machine Learning, and Generative AI Ecosystems

As artificial intelligence shifts from experimental research to core operational infrastructure, the machine learning capabilities of the underlying data platform become critical differentiating factors. In this arena, Databricks has traditionally maintained a dominant market position, operating as a unified environment explicitly designed for the end-to-end machine learning and generative AI lifecycle.

Databricks natively incorporates pre-installed, distributed machine learning libraries alongside AutoML capabilities for automated hyperparameter tuning and model selection. It is tightly integrated with MLflow 3.0, an enterprise-grade framework for tracking experiments, registering models, and managing complex deployment lifecycles. Furthermore, Databricks has aggressively expanded into the generative AI space through its Mosaic AI Stack. This suite enables enterprises to construct sophisticated AI agents grounded securely in proprietary corporate data using Retrieval-Augmented Generation (RAG) architectures. Tools such as Vector Search (which creates indexes with real-time source data syncing), Agent Evaluation (utilizing AI-assisted judges for output quality), and custom LLM fine-tuning empower data science teams to deploy complex predictive models directly adjacent to the underlying data. Databricks Model Serving ensures unified deployment for both classical ML and modern LLM chains via highly available REST APIs.

The documented business outcomes of these Databricks AI implementations are substantial. Financial intelligence firm FactSet built a text-to-code knowledge agent system on Databricks, achieving a 44% improvement in the accuracy of natural language responses. The financial conglomerate Block developed an AI agent system to automate complex operations, resulting in $10 million in direct productivity gains. Similarly, the Intercontinental Exchange (ICE) deployed an agent system utilizing unique financial datasets to provide customer answers with 96% accuracy, while Comcast utilized the platform to boost viewer engagement through intelligent voice commands while simultaneously achieving a 10x reduction in overarching machine learning compute costs.

Snowflake, recognizing the immense strategic imperative of artificial intelligence, has rapidly expanded its capabilities beyond traditional SQL analytics. However, its approach is distinctly democratized, aiming to embed AI capabilities directly into the warehouse environment for use by standard analysts rather than solely catering to deep learning engineers. Through the Snowpark API, Snowflake allows developers to execute Python, Java, and Scala code securely within the data warehouse boundary. This facilitates robust in-database feature engineering and model inference without the security risks or latency associated with moving massive datasets to external processing clusters. Recently, Snowflake introduced Snowflake Cortex AI, which deploys fully managed, industry-leading large language models directly within the platform’s security perimeter. Features like Cortex Analyst and Snowflake Copilot allow data analysts to execute machine learning models, semantic vector searches, and LLM prompts utilizing familiar SQL syntax. While Databricks remains the premier choice for custom deep learning and complex ML engineering, Snowflake’s AI offerings are highly optimized for bringing applied AI directly to the SQL-fluent business user.

Data Governance, Security, and Decentralized Topologies

Enterprise data governance requires a delicate balance between democratized data access and rigorous, cryptographically secure enforcement. Snowflake’s governance is seamlessly orchestrated through its central Cloud Services Layer, utilizing a strict Role-Based Access Control (RBAC) model. Access rights are tied strictly to user roles rather than physical data locations, enabling incredibly granular, fine-grained access policies. Snowflake natively supports dynamic data masking to protect personally identifiable information (PII) and features enterprise-grade end-to-end encryption.

A distinctive governance feature of Snowflake is “Time Travel,” which allows users to query past states of a database to perform backfills or recover accidentally deleted data from up to 90 days in the past (the 90-day retention window is an Enterprise Edition capability). Similarly, its “Zero-Copy Cloning” capability permits the near-instantaneous creation of database copies for testing environments without duplicating the underlying physical storage, which avoids significant storage costs. Furthermore, Snowflake’s Secure Data Sharing architecture allows organizations to share live, governed data sets across separate corporate accounts, external organizations, and even different cloud providers, without manual data duplication or complex API engineering.

Databricks manages enterprise governance through the Unity Catalog, an overarching, unified governance solution designed to centralize access controls, detailed auditing, and data lineage tracking across the entire lakehouse. Crucially, Unity Catalog extends its governance parameters beyond simple tabular permissions; it actively governs machine learning models, BI dashboards, and unstructured files, enforcing strict guardrails across all enterprise AI initiatives. Databricks’ E2 architecture ensures that nodes operate exclusively with private IP addresses through secure cluster connectivity, supporting customer-managed keys (KMS) for highly regulated compliance environments. Through Delta Sharing, Databricks provides an open protocol for secure data sharing across completely disparate computing platforms, directly challenging Snowflake’s proprietary sharing ecosystem by allowing organizations to share data without requiring the recipient to run Databricks.

The architectural flexibility of these platforms heavily influences the implementation of modern organizational topologies, specifically Data Mesh and Data Fabric frameworks. Traditional, centralized data warehousing frequently struggles to scale across massive global conglomerates due to central engineering bottlenecks. Data Mesh advocates for domain-driven, decentralized data ownership, while Data Fabric relies on an integrated layer of data and connecting processes utilizing metadata. By 2026, organizations are increasingly adopting hybrid approaches. A highly effective recommended structure involves identifying core, highly regulated data domains (such as financial transactions or customer master data) for centralized Data Fabric treatment, while allowing analytical initiatives to operate as decentralized Data Mesh nodes. Tools like Promethium’s AI Insights Fabric act as an overlay, delivering centralized governance with distributed access. This zero-copy federation approach allows a central platform to enforce governance at the query level while distributed teams operate autonomously across Databricks and Snowflake environments, retrieving context without initiating redundant data movement.

Pipeline Orchestration: Dynamic Tables versus Delta Live Tables

Automating data transformation pipelines is a core function of the modern data platform, effectively neutralizing the need for complex, external orchestration tools in many scenarios. Both providers offer native, highly sophisticated declarative frameworks for building continuous data pipelines: Snowflake Dynamic Tables and Databricks Delta Live Tables (DLT).

Snowflake Dynamic Tables allow analytics engineers to define the desired end state of a data pipeline using standard SQL syntax. Snowflake’s internal engine then automatically calculates and manages the dependency graph required to materialize that data, handling incremental data refreshes autonomously. Because these tables utilize Snowflake’s virtual warehouses, administrators can assign compute resources (via T-shirt sizing such as X-Small or Large) to specific transformation layers independently. Because Dynamic Tables run on Snowflake’s instant-on compute infrastructure, the warehouse typically resumes almost immediately and is billed per second of runtime, subject to Snowflake’s 60-second minimum each time a warehouse resumes. However, ingesting continuous streaming data into Dynamic Tables often requires the manual configuration of Snowflake Streams, objects that track data manipulation language (DML) changes in a source table, which necessitates more manual engineering oversight.
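The dependency management described above can be illustrated with a small sketch. This is not Snowflake's engine; it is a plain-Python model that assumes a hypothetical five-table pipeline and uses the standard library's graphlib module to derive a valid refresh order and to work out which downstream tables become stale when one source changes.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each table maps to the set of tables it reads from.
dependencies = {
    "raw_orders": set(),
    "raw_customers": set(),
    "clean_orders": {"raw_orders"},
    "customer_orders": {"clean_orders", "raw_customers"},
    "daily_revenue": {"customer_orders"},
}


def refresh_order(deps: dict[str, set[str]]) -> list[str]:
    """Return tables in an order where every table follows its upstream sources."""
    return list(TopologicalSorter(deps).static_order())


def needs_refresh(deps: dict[str, set[str]], changed: str) -> set[str]:
    """Return every table that is directly or transitively downstream of `changed`."""
    stale = {changed}
    # Walking in dependency order guarantees upstream tables are marked first.
    for table in TopologicalSorter(deps).static_order():
        if deps[table] & stale:
            stale.add(table)
    return stale - {changed}


order = refresh_order(dependencies)
stale_tables = needs_refresh(dependencies, "raw_customers")
```

In this model a change to raw_customers marks only customer_orders and daily_revenue as stale, which is the essence of an incremental refresh: untouched branches of the graph are left alone.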

Conversely, Databricks Delta Live Tables provides a comprehensive, declarative framework for building both complex batch and high-throughput streaming pipelines utilizing either SQL or Python. DLT inherently understands data quality, allowing data engineers to establish strict data expectations (guardrails) that automatically drop, quarantine, or gracefully fail invalid incoming records, ensuring downstream analytical integrity. Furthermore, DLT natively integrates with Databricks Auto Loader, an automated mechanism that drastically simplifies the continuous ingestion of raw files arriving in cloud storage without requiring complex state management. However, a significant architectural distinction lies in the compute instantiation. DLT pipelines historically rely on Spark clusters. In standard “serverless” mode, these Spark clusters can take up to ten minutes to fully initialize, creating problematic latency for near-real-time requirements. To achieve strict service level agreements (SLAs), users must manually enable “Performance-Optimized” mode, which dramatically reduces cluster startup times to 30–60 seconds, though this incurs a substantial premium compute cost over the standard mode.
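The drop, quarantine, and fail behaviors described above can be sketched in plain Python. This is an illustration of the semantics only and deliberately does not use the DLT API; the Expectation and PipelineResult classes, the apply_expectations function, and the precedence rule (fail over quarantine over drop) are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class Expectation:
    name: str
    predicate: Callable[[dict], bool]  # returns True when the record is valid
    on_violation: str                  # "drop", "quarantine", or "fail"


@dataclass
class PipelineResult:
    passed: list = field(default_factory=list)
    quarantined: list = field(default_factory=list)
    dropped: int = 0


def apply_expectations(records: list[dict], expectations: list[Expectation]) -> PipelineResult:
    """Route each record according to the strictest expectation it violates."""
    result = PipelineResult()
    for record in records:
        violated = [e for e in expectations if not e.predicate(record)]
        actions = {e.on_violation for e in violated}
        if "fail" in actions:
            names = ", ".join(e.name for e in violated if e.on_violation == "fail")
            raise ValueError(f"record {record!r} violated: {names}")
        if "quarantine" in actions:
            result.quarantined.append(record)  # kept aside for later inspection
        elif "drop" in actions:
            result.dropped += 1                # discarded, only counted
        else:
            result.passed.append(record)
    return result


# Hypothetical records and rules.
rules = [
    Expectation("valid_order_id", lambda r: r["order_id"] is not None, "drop"),
    Expectation("non_negative_amount", lambda r: r["amount"] >= 0, "quarantine"),
]
records = [
    {"order_id": 1, "amount": 10.0},
    {"order_id": None, "amount": 5.0},
    {"order_id": 2, "amount": -3.0},
]
result = apply_expectations(records, rules)
```

Keeping the rules declarative, separate from the transformation logic, is what allows a framework to report violation counts per rule and to change the enforcement action without rewriting the pipeline.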

Total Cost of Ownership (TCO) and Financial Operations (FinOps)

The financial mechanics of both platforms rely on highly complex, consumption-based pricing models, making direct Total Cost of Ownership (TCO) comparisons highly variable and heavily dependent on specific workload execution patterns. The 2026 enterprise landscape requires mature FinOps practices to prevent severe budget overruns on either platform.

Snowflake Pricing Mechanics

Snowflake monetizes through strictly separated storage and compute metrics. Storage is generally billed at a highly predictable flat rate, approximately $23 per terabyte per month. Compute is monetized via “Snowflake Credits,” which are consumed continuously whenever a virtual warehouse is active. The dollar value of a single credit depends heavily on the selected service edition:

| Snowflake Service Edition | Targeted Enterprise Use Case | Starting Price per Credit (USD) |
|---|---|---|
| Standard Edition | Core foundational functionality, unstructured data, basic analytics | $2.00 |
| Enterprise Edition | Large-scale initiatives, multi-cluster warehouses, up to 90 days Time Travel | $3.00 |
| Business Critical | Highly regulated industries, requiring HIPAA, PCI compliance, private networking | $4.00 |
| Virtual Private Snowflake | Fully isolated network environments for supreme physical security | Custom Pricing |

Data derived from standard industry pricing guides. Note: Regional data center variances (e.g., London vs. US East) frequently impose a 10–30% premium on underlying infrastructure costs.

A standard medium-sized virtual warehouse consumes 4 credits per hour. Operating a medium warehouse on the Enterprise tier therefore costs $12.00 per hour of active compute (4 credits × $3.00 per credit). Because Snowflake can automatically suspend a warehouse once it has been idle for a configured period and resume it on the next request, organizations in principle pay only for active processing time. However, Snowflake’s automated optimizations, minimal required performance tuning, and fully managed infrastructural model carry a heavily documented “simplicity premium”.
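The credit arithmetic above can be expressed as a short calculation. The sketch below uses the per-credit starting prices from the table and the 4-credits-per-hour figure for a medium warehouse; the other warehouse sizes follow Snowflake's usual doubling convention and are included here as an assumption, as are the function names and the 6-hours-per-day usage pattern.

```python
# Credits consumed per hour of active compute. Only "Medium" is stated in the text;
# the other sizes assume the usual doubling between adjacent sizes.
CREDITS_PER_HOUR = {"X-Small": 1, "Small": 2, "Medium": 4, "Large": 8}

# Starting list prices per credit (USD), taken from the edition table.
PRICE_PER_CREDIT = {"Standard": 2.00, "Enterprise": 3.00, "Business Critical": 4.00}


def hourly_cost(size: str, edition: str) -> float:
    """Cost in USD of one hour of active compute for a single warehouse."""
    return CREDITS_PER_HOUR[size] * PRICE_PER_CREDIT[edition]


def monthly_cost(size: str, edition: str, active_hours_per_day: float, days: int = 30) -> float:
    """Cost in USD for a month, counting only hours the warehouse is actually running."""
    return hourly_cost(size, edition) * active_hours_per_day * days


medium_enterprise = hourly_cost("Medium", "Enterprise")
# Hypothetical usage pattern: the warehouse is active 6 hours per day.
medium_enterprise_month = monthly_cost("Medium", "Enterprise", active_hours_per_day=6)
```

The estimate excludes storage, cloud services charges, and regional premiums, so it should be read as a lower bound on the compute line item rather than a full bill.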

Databricks Pricing Mechanics

Databricks prices its platform utilizing Databricks Units (DBUs), a metric that encapsulates the total use of CPU, memory, and I/O resources. Crucially, this DBU cost is layered on top of the underlying cloud provider’s infrastructural costs (e.g., the cost of the AWS EC2 instances physically running the cluster). DBU rates fluctuate dramatically based on the specific workload type, the platform tier (Standard vs. Premium), and the deployment region.

| Databricks Compute Type | Typical Workload | Standard Tier (per DBU) | Premium Tier (per DBU) |
|---|---|---|---|
| Jobs Compute | Automated orchestration and rigid batch pipelines | ~$0.07 | ~$0.15 |
| SQL Compute | BI reporting and structured SQL analytics | ~$0.22 | ~$0.22 |
| All-Purpose Compute | Interactive notebooks and exploratory data science | ~$0.40 | ~$0.55 |
| Serverless SQL | Fully managed SQL endpoints requiring zero cluster sizing | N/A | ~$0.75 |

Data reflects approximate DBU costs deployed on AWS infrastructure. GCP and Azure costs vary due to distinct native storage mechanisms.

The profound granular control offered by Databricks allows for aggressive, sophisticated cost optimization. By utilizing highly discounted ephemeral spot instances for background Jobs Compute ($0.07/DBU), large data teams can execute massive ETL processing tasks at a fraction of the cost of managed warehouses. Organizations frequently report 20-40% higher costs when running raw, brute-force data engineering workloads on Snowflake compared to a meticulously optimized Databricks deployment. Conversely, the primary risk of Databricks is configuration failure; poorly configured Spark clusters that fail to automatically terminate or that utilize excessively large instances can result in catastrophic budget overruns.
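Because DBU charges sit on top of the cloud provider's instance charges, a realistic estimate has to add the two together. The sketch below does that for a single cluster; the DBU rates come from the figures above, while the DBU consumption rate (10 DBUs per hour), node count, and instance price ($0.50 per node-hour) are hypothetical values chosen only to make the arithmetic visible.

```python
def hourly_cluster_cost(
    dbu_per_hour: float,
    dbu_rate_usd: float,
    node_count: int,
    instance_usd_per_hour: float,
) -> float:
    """Total hourly cost in USD: Databricks platform charge plus cloud instance charge."""
    platform = dbu_per_hour * dbu_rate_usd
    infrastructure = node_count * instance_usd_per_hour
    return platform + infrastructure


# Hypothetical 4-node cluster that emits 10 DBUs per hour, on instances priced at $0.50/hour.
jobs_premium = hourly_cluster_cost(10, 0.15, 4, 0.50)         # Jobs Compute, Premium tier
all_purpose_premium = hourly_cluster_cost(10, 0.55, 4, 0.50)  # All-Purpose Compute, Premium tier
```

Under these assumptions the same hardware costs roughly twice as much per hour when run as All-Purpose Compute instead of Jobs Compute, which is why scheduled pipelines are usually moved off interactive clusters. Spot pricing would lower only the infrastructure term, not the DBU term.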

For purely analytical SQL workloads, independent benchmarks frequently position Snowflake as highly cost-effective, delivering rapid response times that minimize the total active compute duration, thus yielding a lower TCO for BI workloads. Migrations between these specific clouds can also yield savings if appropriately optimized; for example, media conglomerate Business Insider partnered with DAS42 to migrate heavily siloed legacy systems into Snowflake, ultimately driving a 23% month-over-month spend reduction through aggressive warehouse tuning.

Decision Framework for Enterprise Data Architects

In 2026, selecting between Snowflake and Databricks is fundamentally an exercise in assessing current engineering talent and prioritizing primary workloads. The philosophical gap between the platforms is actively narrowing—Snowflake is aggressively expanding into Python, machine learning workflows, and open table formats like Iceberg, while Databricks is vastly improving its SQL analytics endpoints and serverless compute capabilities to attract BI analysts.

Snowflake represents the optimal architectural choice when:

- The organization’s primary operational mandate revolves around governed business intelligence, highly concurrent executive dashboards, and declarative SQL analytics.

- The enterprise seeks to entirely minimize infrastructural management overhead, preferring to pay a slight premium for a “fully managed,” self-optimizing operational philosophy.

- Secure, cross-organizational data sharing across diverse cloud environments is a critical, revenue-generating operational requirement.

- The internal engineering team’s core competency lies heavily in SQL and analytics engineering rather than complex distributed systems programming in Scala or Python.

Databricks represents the optimal architectural choice when:

- The enterprise heavily prioritizes the continuous development of complex, production-grade machine learning models and highly customized Generative AI applications.

- The platform must ingest, process, and transform massive volumes of unstructured data (video, audio, raw text) or execute continuous streaming data pipelines with extreme throughput.

- The organization strictly mandates the use of open table formats (Delta Lake, Iceberg) and an open lakehouse architecture to systematically mitigate the risk of vendor lock-in.

- Data science teams require deeply collaborative, multi-language notebook environments utilizing Python, R, and Scala for deep, exploratory data science.

Industry analysts such as Gartner and Forrester routinely position both platforms as definitive “Leaders” in their respective Magic Quadrant and Wave reports, acknowledging Databricks’ supremacy in Data Science and Machine Learning Platforms and Snowflake’s dominance in Cloud Database Management Systems. Ultimately, the market positioning reveals the strategic reality: Databricks dominates AI/ML workloads with an 8.67% market share and staggering 57% year-over-year growth, while Snowflake commands the largest enterprise warehousing base, holding an 18.33% market share valued at $3.8 billion.

Modernizing Legacy Infrastructure: Strategic Migration to Google BigQuery

While the choice of the ultimate target cloud platform defines an enterprise’s future capabilities, the actual transition from legacy, on-premises systems (e.g., Teradata, Netezza, Oracle, or legacy SAP environments) represents a period of extreme operational friction and risk. Legacy data warehouses inherently suffer from escalating maintenance costs, inflexible vertical scaling limits, an inability to natively process unstructured data, and a profound lack of compatibility with modern machine learning frameworks.

Migrating to a truly serverless, cloud-native platform like Google BigQuery enables the absolute decoupling of storage and compute, multi-modal data processing, and seamless, integrated access to the broader Google Cloud ecosystem. However, enterprise migrations are rarely simple lift-and-shift operations; they encompass the algorithmic translation of proprietary SQL dialects, the complex restructuring of historical database schemas, and the high-fidelity synchronization of massive datasets during the production cutover phase. Legacy systems introduce unique formatting anomalies that must be rectified during migration, such as the necessity to trim empty spaces from legacy fixed-length CHAR columns, strip leading zeros that break numerical aggregations, manage highly specific floating-point precision issues disguised as strings, and harmonize disparate time zone offsets.
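The formatting anomalies listed above are typically handled by small, testable cleanup functions applied during the load. The following sketch shows one plausible shape for them using only the Python standard library; the function names are hypothetical, and the rules (right-trim only, decimal rather than binary floating point, reject timestamps without an offset) are assumptions that a real migration would confirm against the source system.

```python
from datetime import datetime, timezone
from decimal import Decimal


def clean_char(value: str) -> str:
    """Remove the space padding that fixed-length CHAR columns append."""
    return value.rstrip(" ")


def parse_zero_padded_int(value: str) -> int:
    """Convert a zero-padded numeric string such as '000123' to an integer."""
    return int(value.strip())


def parse_decimal(value: str) -> Decimal:
    """Parse a number stored as text without introducing binary floating-point error."""
    return Decimal(value.strip())


def to_utc(value: str) -> datetime:
    """Normalize an ISO 8601 timestamp with an explicit offset to UTC."""
    parsed = datetime.fromisoformat(value)
    if parsed.tzinfo is None:
        # Guessing a zone for naive timestamps would silently corrupt data.
        raise ValueError(f"timestamp has no UTC offset: {value!r}")
    return parsed.astimezone(timezone.utc)


name = clean_char("ACME      ")
account = parse_zero_padded_int("000123")
total = parse_decimal("0.10") + parse_decimal("0.20")
event_time = to_utc("2024-03-01T09:30:00+05:30")
```

Using Decimal for values that arrive as strings avoids the classic case where 0.10 + 0.20 does not compare equal to 0.30 in binary floating point, which would otherwise surface later as a validation mismatch.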

Google Cloud Native Migration Ecosystem

To systematically dismantle these legacy dependencies without interrupting ongoing business operations, Google Cloud provides a comprehensive, end-to-end suite of native tools housed within the overarching BigQuery Migration Service (BQMS). This framework formally structures the massive migration lifecycle into discrete, highly manageable iterations, drastically reducing downtime and the risk of data loss.

BigQuery Migration Assessment

The migration methodology begins with an exhaustive, data-driven assessment phase. The BigQuery Migration Assessment tool operates by requiring the extraction of highly specific metadata and historical query execution logs from the legacy source database. For instance, when migrating from an on-premises Teradata environment, system administrators execute the dwh-migration-dumper utility to securely pull historical logs directly from critical system tables such as dbc.QryLogV (for queries), dbc.DBQLUtilityTbl (for utility processes), and dbc.ResUsageScpu (for exact resource consumption).

Once this telemetry is uploaded to a secure, private Cloud Storage bucket (utilizing the --pap flag to enforce public access prevention), the BQMS generates a highly comprehensive Looker Studio report detailing the exact “steady state” of the existing legacy environment. This assessment fundamentally acts as a powerful infrastructure optimization engine. It explicitly identifies “Tables With No Usage” and “Tables With No Writes,” allowing data architects to surgically deprecate obsolete data pipelines and orphaned tables rather than blindly migrating decades of technical debt into the cloud. Furthermore, the assessment analyzes historical query runtimes and complex join conditions to automatically recommend BigQuery-specific optimizations. For example, it identifies tables projecting over 10,000 partitions and actively recommends applying fine-grained clustering strategies to optimize future query performance and reduce cloud spend. Additionally, the Google Cloud Migration Center can ingest these exact environment details to generate highly precise cost estimations for running the modernized workloads in BigQuery.
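The kind of classification behind the “Tables With No Usage” and “Tables With No Writes” findings can be approximated from query logs with simple set arithmetic. The sketch below is not the assessment tool's logic; it assumes a hypothetical, already-parsed log of (table, operation) entries and defines "no usage" as neither read nor written in the window and "no writes" as read but never written.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QueryLogEntry:
    table: str
    operation: str  # "READ" or "WRITE" (hypothetical, pre-parsed from query text)


def classify_tables(all_tables: set[str], log: list[QueryLogEntry]) -> dict[str, set[str]]:
    """Split the catalog into unused tables and tables that are read but never written."""
    read = {entry.table for entry in log if entry.operation == "READ"}
    written = {entry.table for entry in log if entry.operation == "WRITE"}
    return {
        "no_usage": all_tables - read - written,  # candidates for deprecation
        "no_writes": read - written,              # static data: migrate once, skip pipelines
    }


# Hypothetical catalog and log window.
catalog = {"sales", "customers", "legacy_2009_backup", "country_codes"}
log = [
    QueryLogEntry("sales", "WRITE"),
    QueryLogEntry("sales", "READ"),
    QueryLogEntry("customers", "WRITE"),
    QueryLogEntry("country_codes", "READ"),
]
report = classify_tables(catalog, log)
```

The length of the log window matters: a table used only in year-end reporting will look unused in a three-month extract, so any deprecation list produced this way needs review by the owning team.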

AI-Enhanced SQL Translation Services

Historically, the most prohibitive financial and temporal barrier to database migration is the manual refactoring of thousands of proprietary SQL scripts, legacy stored procedures, and complex Data Definition Language statements.

SQL Translation Service

The BQMS neutralizes this barrier through a highly sophisticated SQL Translation Service capable of algorithmically converting over a dozen distinct proprietary dialects—including Teradata BTEQ, Amazon Redshift SQL, Oracle PL/SQL, Apache HiveQL, and even Snowflake SQL—into standard GoogleSQL.

This translation is facilitated through two primary operational mechanisms:

Batch SQL Translator

Designed exclusively for bulk script migration, this tool parses massive repositories of source code simultaneously. Crucially, the batch translator generates a specific translation configuration ID that persistently stores deep object name mapping rules and complex schema search paths, ensuring that structural schema name changes are applied uniformly and consistently across thousands of translated files.

Interactive SQL Translator

This web-based interface allows engineers to debug, refine, and translate individual queries in real-time, applying the same configuration IDs generated by the batch process to ensure absolute consistency.

By 2026, both of these translation services are heavily enhanced by Google’s Gemini Large Language Model. If a batch translation fails with an error such as RelationNotFound, or because the translator cannot statically determine the input data types of a User-Defined Function (UDF) written in C, Gemini analyzes the failure and provides context-aware suggested fixes within the log interface. This allows engineers to review and apply AI-generated corrections quickly, accelerating the refactoring of complex operational logic.

Data Validation Tool (DVT)

Ensuring data fidelity between the legacy source and the newly instantiated BigQuery environment is paramount. The open-source Data Validation Tool (DVT), a Python-based command-line interface built on the Ibis framework, automates this verification process. DVT executes homogeneous and heterogeneous database comparisons through several distinct validation types:

- Column Validations: The tool rapidly computes broad, high-level aggregations (--count, --sum, --min, --max, --std) across all specified numeric or string columns on both the source database and BigQuery simultaneously, instantly flagging any statistical variance.

- Row Validations: For absolute precision, DVT calculates deep cryptographic checksum hashes across entire concatenated rows. This mechanism casts all columns to strings, sanitizes them via IFNULL and RTRIM commands, concatenates the resulting string, hashes it using SHA256, and compares the resulting target hash versus the source hash. This instantly identifies minute, invisible discrepancies in data types or precision (e.g., floating-point misrepresentations or legacy trailing spaces in CHAR columns). Because disparate database engines inherently handle null values and string padding differently, DVT supports customized YAML configurations that define “Calculated Fields.” This allows engineers to inject dynamic Ibis expression functions directly into the validation query, ensuring that expected systemic differences do not trigger false positive validation failures.
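The row validation steps described above (cast to string, replace nulls, trim trailing whitespace, concatenate, hash with SHA256, compare) can be sketched in plain Python. This is an illustration of the technique rather than DVT's own code: the function names are hypothetical, and the "|" separator between columns is an addition made here so that adjacent values cannot run together.

```python
import hashlib


def row_hash(row: dict, columns: list[str]) -> str:
    """Hash a row after normalizing each column the way the text describes."""
    parts = []
    for column in columns:
        value = row.get(column)
        text = "" if value is None else str(value)  # IFNULL equivalent, then cast to string
        parts.append(text.rstrip())                 # RTRIM equivalent
    # Separator is an assumption of this sketch, added to keep column boundaries unambiguous.
    joined = "|".join(parts)
    return hashlib.sha256(joined.encode("utf-8")).hexdigest()


def mismatched_keys(source: list[dict], target: list[dict], key: str, columns: list[str]) -> list:
    """Return primary keys whose row hash differs between source and target (or is missing)."""
    target_hashes = {row[key]: row_hash(row, columns) for row in target}
    return [row[key] for row in source if target_hashes.get(row[key]) != row_hash(row, columns)]


# Hypothetical data: row 1 differs only by CHAR padding, row 2 by a floating-point drift.
columns = ["id", "name", "amount"]
source_rows = [
    {"id": 1, "name": "ACME   ", "amount": None},
    {"id": 2, "name": "Bolt", "amount": 10.5},
]
target_rows = [
    {"id": 1, "name": "ACME", "amount": None},
    {"id": 2, "name": "Bolt", "amount": 10.50001},
]
mismatches = mismatched_keys(source_rows, target_rows, key="id", columns=columns)
```

Normalizing before hashing is what separates expected differences (padding, null representation) from genuine ones: here the padded name is treated as equal, while the drifted amount is reported.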

Data Migration Tool (DMT) and Orchestration Frameworks

The physical movement of data is orchestrated via the BigQuery Data Transfer Service (DTS). DTS provides a fully managed, scheduled infrastructure for loading massive analytical datasets without requiring custom code or infrastructure provisioning. It supports massive historical data backfills and continuous incremental loads directly from cloud storage (S3, Azure Blob) and traditional operational databases.

For highly complex, enterprise-scale orchestrations, specifically migrations originating from Teradata or Apache Hive, architects routinely use the open-source Data Migration Tool (DMT). Hosted in Google Cloud Platform’s public GitHub repositories, DMT acts as an overarching orchestration layer. It weaves together DTS (for data movement), the SQL Translation APIs (for script conversion), and DVT (for post-load validation) using Cloud Composer (Apache Airflow 2) Directed Acyclic Graphs (DAGs). Using event-based triggers via Cloud Pub/Sub and Cloud Run, DMT automates the end-to-end migration pipeline: it executes schema creation, validates execution dry-runs, and generates Looker Studio dashboards to monitor migration success and failure rates in real time.
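To make the DAG idea concrete, the following sketch orders a set of migration stages by their dependencies using Python's standard-library graphlib module. It is not DMT's actual Airflow code: the stage names, their ordering, and the boolean handler convention are all assumptions chosen to mirror the stages named in the paragraph above.

```python
from graphlib import TopologicalSorter

# Hypothetical stages; each maps to the set of stages that must finish first.
MIGRATION_DAG = {
    "translate_sql": set(),
    "dry_run_validation": {"translate_sql"},
    "create_schema": {"dry_run_validation"},
    "transfer_data": {"create_schema"},
    "validate_data": {"transfer_data"},
    "publish_dashboard": {"validate_data"},
}

def run_pipeline(dag, handlers):
    """Run stages in dependency order; stop at the first stage that reports failure.

    Returns (completed_stages, failed_stage), where failed_stage is None on success.
    """
    completed = []
    for stage in TopologicalSorter(dag).static_order():
        if not handlers[stage]():
            return completed, stage
        completed.append(stage)
    return completed, None

# Stand-in handlers: every stage succeeds except the data transfer.
handlers = {stage: (lambda: True) for stage in MIGRATION_DAG}
handlers["transfer_data"] = lambda: False

completed, failed = run_pipeline(MIGRATION_DAG, handlers)
print(completed, failed)
```

Because validation depends on the transfer, a failed transfer stops the run before any validation or dashboard stage executes, which is the behavior an orchestration layer is meant to guarantee.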

Navigating SAP ERP Migrations

Organizations running legacy SAP environments face highly specific migration challenges due to SAP’s incredibly complex, proprietary application layer data structures. The BigQuery Connector for SAP supports major in-maintenance enterprise applications, including SAP Business Suite 7, S/4HANA, and various NetWeaver-based applications. However, extracting data natively from the SAP application layer using traditional methods like SAP LT Replication Server (SLT) often introduces severe performance bottlenecks and complex security management overhead.

To overcome this, organizations frequently deploy specialized third-party replication tools like BryteFlow XL Ingest. BryteFlow bypasses standard coding requirements, offering real-time, no-code replication of massive SAP “Godzilla” tables directly into BigQuery. Crucially, it supports extraction from both the SAP application layer (utilizing CDS views, ODP, and standard Extractors) and directly from the underlying database layer, parsing complex SAP Pool and Cluster tables with automated structural mapping. This ensures that downstream BI tools—including SAP’s own BusinessObjects Web Intelligence—can seamlessly query the modernized BigQuery environment with native compatibility.

Evaluating Automated ELT Platforms for BigQuery Ingestion

While Google’s native services excel at the initial historical migration and bulk transfer, the long-term, continuous ingestion of data from highly diverse SaaS platforms, CRMs, and operational databases into BigQuery requires robust, dedicated Extract, Load, Transform (ELT) tooling. The industry shift from traditional ETL to ELT is fundamentally a shift in compute economics: because cloud data warehouses like BigQuery offer elastic, massively parallel (MPP) compute, it is typically more cost-effective to load raw data directly into the warehouse and perform transformations in situ than to maintain expensive intermediate processing servers that transform data in transit.
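The load-then-transform pattern can be illustrated with a small, self-contained sketch. Here Python's built-in sqlite3 module stands in for the warehouse purely so the example runs anywhere; the table names and sample rows are hypothetical, and a real pipeline would issue comparable SQL to BigQuery instead.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Extract + Load: raw records land in the warehouse untouched.
conn.execute(
    "CREATE TABLE raw_orders (order_id INTEGER, customer TEXT, amount_cents INTEGER, status TEXT)"
)
raw_rows = [
    (1, "acme", 1250, "paid"),
    (2, "acme", 800, "refunded"),
    (3, "globex", 4300, "paid"),
]
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?, ?)", raw_rows)

# Transform: the filtering and aggregation run inside the engine, as SQL.
conn.execute(
    """
    CREATE TABLE customer_revenue AS
    SELECT customer, SUM(amount_cents) / 100.0 AS revenue
    FROM raw_orders
    WHERE status = 'paid'
    GROUP BY customer
    """
)

result = conn.execute(
    "SELECT customer, revenue FROM customer_revenue ORDER BY customer"
).fetchall()
print(result)
```

No intermediate server touches the data between load and transform; the only compute involved in the transformation is the engine's own, which is the economic argument for ELT.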

The market features several elite automated platforms, each optimizing different operational variables.

Fivetran: Automated Schema Evolution and Change Data Capture

Fivetran is widely recognized as a leading fully managed, automated ELT pipeline solution. Its underlying architecture is explicitly designed to minimize ongoing engineering overhead through extensive automation. When extracting data from legacy operational databases (e.g., SQL Server, Oracle, PostgreSQL) into BigQuery, Fivetran uses Change Data Capture (CDC) to identify and extract only the modified rows, minimizing ingestion latency and avoiding unnecessary compute load on the source systems.

A defining, proprietary advantage of Fivetran is its Teleport Sync capability. Traditional log-based CDC fundamentally requires deep, administrative-level access to the database’s internal transaction logs (e.g., the Write-Ahead Log in PostgreSQL or the transaction log via a DLL in SQL Server). Teleport Sync elegantly circumvents this requirement by executing a full table scan and calculating a highly compressed snapshot of row hashes entirely in memory. It then compares these in-memory hashes between sync intervals to instantly identify data modifications. This is an invaluable feature when administrative access to legacy transactional logs is strictly prohibited by infosec policies, or when legacy databases undergo aggressive TRUNCATE/LOAD operations that routinely invalidate standard change logs. However, Teleport Sync possesses a specific limitation: if a source table is actively modified during the execution of an ongoing sync interval, the snapshot calculation becomes invalid, forcing Fivetran to execute a full historical re-sync of the entire table to ensure absolute data consistency.
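The general idea of log-free change detection, hashing every row during a full scan and diffing the result against the previous snapshot, can be sketched as follows. This is a simplified illustration of the concept and not Fivetran's implementation: the function names, the use of SHA-256, and the sample tables are assumptions, and the compression of the snapshot is omitted.

```python
import hashlib

def snapshot(rows, key):
    """Full table scan: map each primary key to a hash of the row's contents."""
    snap = {}
    for row in rows:
        payload = repr(sorted(row.items())).encode("utf-8")
        snap[row[key]] = hashlib.sha256(payload).digest()
    return snap

def diff_snapshots(previous, current):
    """Compare two snapshots and classify keys as inserted, updated, or deleted."""
    inserted = sorted(current.keys() - previous.keys())
    deleted = sorted(previous.keys() - current.keys())
    updated = sorted(
        k for k in current.keys() & previous.keys() if current[k] != previous[k]
    )
    return {"inserted": inserted, "updated": updated, "deleted": deleted}

# Hypothetical table contents at two consecutive sync intervals.
table_at_t0 = [{"id": 1, "qty": 5}, {"id": 2, "qty": 7}, {"id": 3, "qty": 1}]
table_at_t1 = [{"id": 1, "qty": 5}, {"id": 2, "qty": 9}, {"id": 4, "qty": 2}]

changes = diff_snapshots(snapshot(table_at_t0, "id"), snapshot(table_at_t1, "id"))
print(changes)
```

Only the keys reported in the diff need to be re-extracted, so no access to the transaction log is required. The trade-off is that every sync still pays for a full scan of the source table.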

Furthermore, Fivetran enforces fully automated schema evolution. If an upstream SaaS application developer adds a new column, alters an existing data type, or executes a soft delete, Fivetran automatically detects the change and propagates it into the BigQuery destination without generating alerts or requiring manual engineering intervention. Despite this operational simplicity, the platform carries a distinct financial risk: Fivetran’s consumption-based pricing, which is metered on monthly active rows, can become prohibitively expensive for enterprises moving massive, continuous volumes of streaming data, because the bill grows with the number of rows that change each month.
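Schema evolution of this kind amounts to comparing the source schema with the destination schema and emitting the DDL needed to reconcile them. The sketch below shows that comparison in plain Python; it is not Fivetran's logic. The function name, the table name, and the policy of leaving columns that disappear from the source in place are assumptions, and BigQuery only accepts the generated type change for a limited set of widening conversions.

```python
def plan_schema_changes(table, source_schema, destination_schema):
    """Return GoogleSQL DDL statements that bring the destination in line with the source.

    Schemas are dicts of column name -> type name. Columns missing from the
    source are left untouched in the destination (an assumption of this sketch).
    """
    statements = []
    for column, col_type in source_schema.items():
        if column not in destination_schema:
            statements.append(f"ALTER TABLE {table} ADD COLUMN {column} {col_type}")
        elif destination_schema[column] != col_type:
            statements.append(
                f"ALTER TABLE {table} ALTER COLUMN {column} SET DATA TYPE {col_type}"
            )
    return statements

# Hypothetical schemas: the source gained an email column and widened score.
source_schema = {"id": "INT64", "email": "STRING", "score": "NUMERIC"}
destination_schema = {"id": "INT64", "score": "INT64"}

ddl = plan_schema_changes("crm.contacts", source_schema, destination_schema)
for statement in ddl:
    print(statement)
```

Separating the planning step from execution makes it easy to log or review the statements before they run, which matters when type changes cannot be applied automatically.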

Matillion: Cloud-Native Complex Transformations

While Fivetran focuses primarily on the pure “Extract” and “Load” phases (deliberately deferring the “Transform” phase to downstream, SQL-based tools like dbt), Matillion provides an end-to-end data integration environment explicitly built and optimized for cloud data warehouses like BigQuery. Matillion operates completely natively within the cloud ecosystem, providing visual, low-code transformation orchestration components alongside the ability to inject highly complex, raw SQL and Python scripts.

Matillion’s core architectural strength lies in its use of “pushdown compute.” Rather than extracting massive datasets into its own proprietary servers for transformation, it issues GoogleSQL commands directly to the BigQuery engine. This leverages BigQuery’s processing power to flatten the complex nested STRUCT and ARRAY columns that are common in BigQuery schemas. Furthermore, Matillion integrates with GCP Pub/Sub for native pipeline alerting and allows organizations to consolidate their platform billing through a unified Google Cloud invoice. The platform is exceptionally powerful for advanced data architecture teams requiring highly complex, mid-pipeline data modeling, though it demands a steeper initial learning curve and significantly more architectural planning than a point-and-click tool like Fivetran.
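In a pushdown design, the integration tool's job is to generate SQL and let the warehouse do the work. The sketch below builds a GoogleSQL statement that flattens an array of structs with UNNEST; it only constructs the query text and does not connect to BigQuery. The function name, table name, and column names are hypothetical, and this is not Matillion's generated SQL.

```python
def build_flatten_query(table, array_column, parent_fields, element_fields):
    """Build a GoogleSQL query that emits one output row per element of an ARRAY<STRUCT> column."""
    parent_cols = [f"t.{f}" for f in parent_fields]
    element_cols = [f"elem.{f} AS {array_column}_{f}" for f in element_fields]
    select_list = ",\n  ".join(parent_cols + element_cols)
    return (
        f"SELECT\n  {select_list}\n"
        f"FROM `{table}` AS t\n"
        f"CROSS JOIN UNNEST(t.{array_column}) AS elem"
    )

# Hypothetical table with an `items` column of type ARRAY<STRUCT<sku, qty>>.
query = build_flatten_query(
    "my_project.sales.orders", "items", ["order_id"], ["sku", "qty"]
)
print(query)
```

The resulting string would be submitted to the warehouse as a job, so the flattening runs on BigQuery's compute and no order data ever passes through the machine that generated the SQL.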

Airbyte and the Open-Source Multi-Cloud Alternative

Airbyte has rapidly emerged as the dominant open-source alternative in the ELT landscape, boasting the largest catalog of pre-built source connectors in the integration industry. For large organizations navigating complex multi-cloud environments (e.g., syncing data simultaneously to Redshift in AWS and BigQuery in GCP following corporate acquisitions) or those running niche, bespoke internal applications, Airbyte allows internal developers to build and customize their own connectors using standard Python.

Technically, Airbyte excels by supporting sub-5-minute CDC synchronization, comprehensive security certifications (SOC 2, HIPAA, GDPR), and native destination support for modern open table formats like Apache Iceberg. Because the core platform is open-source, highly secure enterprises can choose to deploy Airbyte entirely on their own self-managed, air-gapped Kubernetes infrastructure. This achieves maximum data sovereignty and cleanly circumvents the aggressive, volume-based pricing spikes associated with commercial SaaS providers. However, the actual total cost of ownership must carefully account for the substantial engineering overhead and personnel costs required to configure, monitor, maintain, and scale this open-source infrastructure across distributed geographic environments.

Specialized Integration Platforms and Streaming CDC

For highly specific data engineering scenarios, architects are increasingly adopting specialized platforms designed to overcome the limitations of the broad ELT giants:

- Streamkap and Estuary: As organizations outgrow batch-based synchronization (e.g., 15-minute intervals), platforms like Streamkap provide true real-time streaming CDC directly from operational databases, avoiding the per-row pricing penalties of Fivetran. Estuary offers a unique architecture that extracts data once from the source and “fans it out” to multiple distinct destinations (e.g., both BigQuery and Redshift simultaneously), completely avoiding duplicate API calls and severe cost blowups in multi-cloud architectures.

- Google Cloud Data Fusion: A visual, fully managed, cloud-native data integration service natively built into GCP. Data Fusion is exceptionally potent for hybrid environments where highly secure, on-premises legacy databases must interact with Google Cloud services, offering centralized pipeline management without requiring custom code. However, its batch-oriented design fundamentally struggles with continuous, low-latency CDC replication when compared to specialized streaming tools.

- Integrate.io & Hevo Data: Integrate.io provides a unique low-code environment capable of near-real-time (60-second) CDC while offering over 220 in-pipeline transformations, supporting complex operational ETL use cases that require data to be manipulated before it lands in BigQuery. Hevo Data provides automated schema drift handling and functional operational plumbing specifically optimized for real-time synchronization workflows without heavy engineering requirements.

- Talend (Qlik Data Integration): Positioned explicitly as a governance-first enterprise data fabric, Talend excels in highly regulated, compliance-heavy industries (like banking and healthcare) migrating massive legacy datasets. It provides deep programmatic data stewardship, robust API management, and centralized cataloging capabilities, making it the ideal choice for unified, highly governed enterprise pipelines servicing downstream visualization tools.
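The "extract once, fan out" pattern mentioned in the list above can be sketched in a few lines. This is a conceptual illustration rather than any vendor's architecture: the function names, the in-memory lists standing in for BigQuery and Redshift, and the sample change events are all assumptions.

```python
def fan_out(read_source, sinks):
    """Read each change event from the source once and deliver it to every sink."""
    delivered = 0
    for event in read_source():
        for sink in sinks:
            sink(event)
        delivered += 1
    return delivered

# Counter showing how many times the source system is actually read.
stats = {"source_reads": 0}

def read_changes():
    """Hypothetical source reader; each yielded event counts as one source read."""
    events = [
        {"op": "insert", "id": 1},
        {"op": "update", "id": 1},
        {"op": "delete", "id": 2},
    ]
    for event in events:
        stats["source_reads"] += 1
        yield event

# In-memory lists stand in for two warehouse destinations.
bigquery_rows, redshift_rows = [], []
count = fan_out(read_changes, [bigquery_rows.append, redshift_rows.append])
print(count, stats["source_reads"], len(bigquery_rows), len(redshift_rows))
```

Each event is read from the source a single time regardless of how many destinations consume it, which is where the savings over running one independent pipeline per destination come from.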

Synthesizing the Data Strategy

The comprehensive modernization of enterprise data warehousing in 2026 is definitively characterized by a massive infrastructural shift from rigid, monolithic legacy systems to highly scalable, autonomous, and artificially intelligent cloud platforms. The analysis of current market dynamics, technological capabilities, and migration methodologies yields several highly critical conclusions for enterprise data architects.

First, the strategic selection between Snowflake and Databricks should no longer be driven by basic feature-parity checklists, as the technological boundaries between the two platforms continue to blur. Snowflake now supports Python and Iceberg, while Databricks aggressively optimizes its SQL analytics and serverless compute. Rather, the decision must be rooted in the organization’s engineering talent pool and primary operational goals. Snowflake remains the pragmatic choice for organizations prioritizing strictly governed, high-concurrency business intelligence and a fully managed operational experience requiring minimal maintenance. Databricks remains the definitive platform for engineering-heavy enterprises seeking to build complex machine learning pipelines, execute highly customized generative AI architectures on unstructured data, and maintain architectural control over open storage formats to prevent vendor lock-in.

Second, the actual execution of a migration from legacy infrastructure to platforms like Google BigQuery is fundamentally an exercise in deep infrastructure optimization, not merely automated data replication. Utilizing the native BigQuery Migration Assessment tool to rigorously analyze historical query logs ensures that decades of accumulated technical debt—unused tables, obsolete pipelines, and unoptimized joins—are systematically eliminated prior to cloud instantiation.

Furthermore, the seamless integration of Gemini-enhanced SQL translation APIs and automated, cryptographic hash-based Data Validation Tools meticulously mitigates the traditional, catastrophic risks associated with dialect conversion failures and data fidelity loss.

Finally, the long-term operational viability of any modernized cloud data warehouse is entirely dependent on the structural integrity of the ELT pipelines feeding it. Organizations must critically evaluate integration platforms based on ingestion velocity, schema volatility, and total cost of ownership at scale. While Fivetran offers unmatched, robust automation for rapidly changing upstream schemas, its compounding costs at massive data volumes frequently force mature organizations toward hybrid architectures. This involves combining managed services with open-source frameworks like Airbyte for basic replication, leveraging real-time streaming CDC platforms like Streamkap for sub-second latency, or deploying the complex, warehouse-native transformation capabilities of Matillion to leverage the raw pushdown compute power of the data warehouse itself.

Ultimately, the successful enterprise data strategy in 2026 demands the construction of a highly integrated data fabric where flexible, decoupled compute engines interact seamlessly with automated, low-latency ingestion pipelines. By rigorously assessing specific workload requirements and aligning them with the appropriate architectural paradigms, enterprises can move beyond the limitations of their legacy systems, establishing a resilient, secure foundation capable of capitalizing on the next generation of applied artificial intelligence and real-time analytics.